The Facebook team announced significant improvements to the AI it uses to describe photos posted on the platform, a technology designed for visually impaired users.

This system, introduced by Facebook in 2016, had already been improved to be faster and more accurate. Its latest update goes a step further, offering more detailed descriptions of photos.

Facebook improves its AI to help visually impaired users

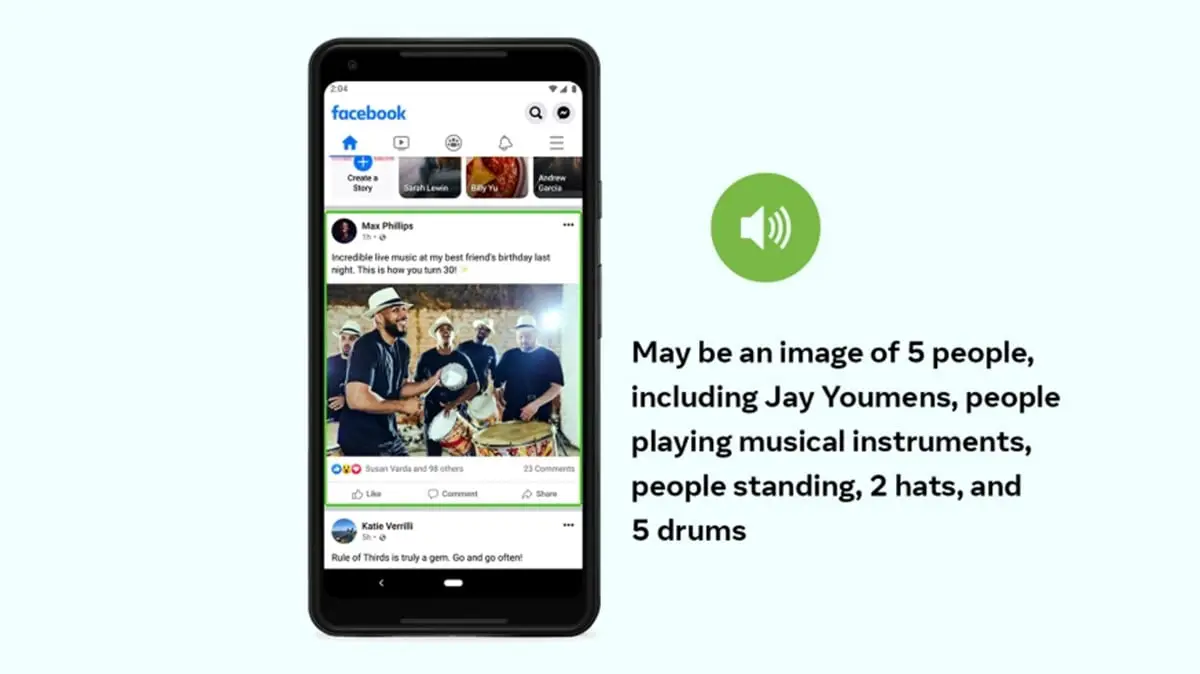

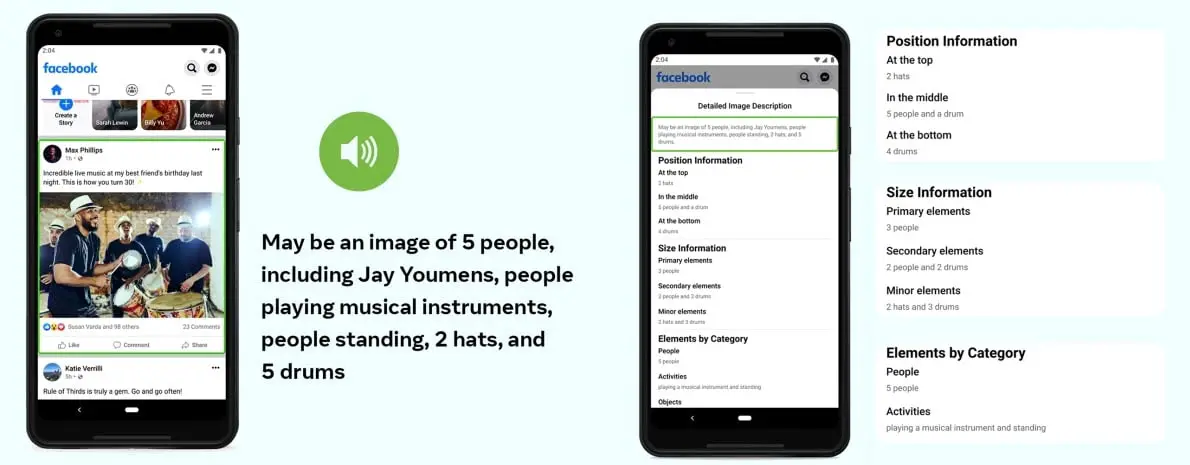

For every image posted on Facebook, the artificial intelligence automatically generates a caption that tries to describe the scene. Facebook doesn’t want the system to describe only individual elements of the image, but to convey the entire scene so users can understand the context and enjoy the post.

That goal is reflected in this new version of the AI, which can now recognize many more elements and offer a more detailed description of the scene. Not only can it tell whether there are people or animals, but it can also recognize different types of activities and places, and even the position of the elements.

For example, in the image above, the artificial intelligence was able to recognize that there are 5 people wearing hats and playing drums. It can also describe how the scene is laid out and which elements are important. As you can see in the photo, the information is organized so that the whole context of the scene can be understood.

All of this information will help visually impaired people to understand what their friends are sharing in their photos. Of course, they may not want to get that information for every photo that appears in their feed, so Facebook will allow them to choose when they want to receive a more detailed description.