A new development in AI-generated music has arrived: Stable Audio 2.0. Although I do not endorse this type of music, I think it is useful to share this information with you.

Yesterday, we also reported that famous musicians have united against music produced with artificial intelligence. For detailed information, see our related article.

Stable Audio 2.0 has the potential to change music production and sound design significantly. So what does it offer? Let’s take a closer look.

Stable Audio 2.0: What’s new

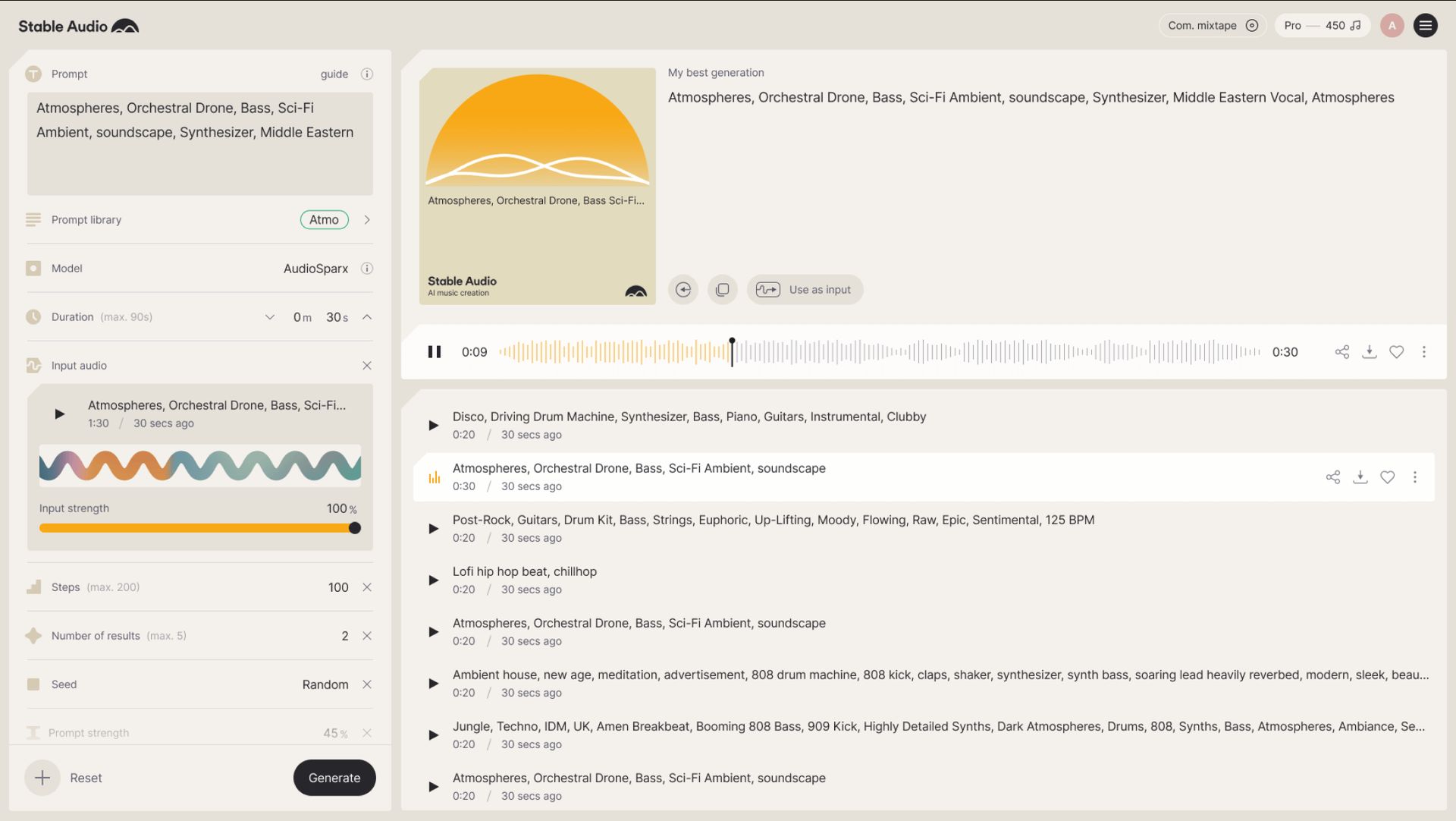

Full 44.1kHz stereo tracks: The model can produce high-quality, full tracks up to three minutes long with consistent musical structure.

Audio-to-audio generation: Stable Audio 2.0 is not just a text-to-audio tool. Users can also upload audio samples and transform them into a wide range of sounds using natural language prompts.
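To give a sense of scale for a full three-minute 44.1kHz stereo track, the short sketch below works out how many raw sample values such a track contains. The sample rate, channel count, and duration come from the description above; the 16-bit sample size is an assumption made only for illustration, since the bit depth is not stated here.

```python
SAMPLE_RATE_HZ = 44_100   # 44.1kHz, as quoted above
CHANNELS = 2              # stereo
DURATION_S = 3 * 60       # upper bound quoted above: three minutes
BYTES_PER_SAMPLE = 2      # assumption: 16-bit PCM (not stated in the article)


def raw_sample_count(sample_rate_hz: int, channels: int, duration_s: int) -> int:
    """Number of individual sample values in an uncompressed track."""
    return sample_rate_hz * channels * duration_s


samples = raw_sample_count(SAMPLE_RATE_HZ, CHANNELS, DURATION_S)
size_mib = samples * BYTES_PER_SAMPLE / (1024 ** 2)
print(f"{samples:,} sample values, about {size_mib:.1f} MiB as 16-bit PCM")
```

That is roughly 15.9 million sample values for a single track, which helps explain why producing full-length audio with a consistent structure is a demanding task.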

Stable Audio 2.0 features

Beyond full-length tracks and audio uploads, Stable Audio 2.0 offers the following features:

Variations and sound effects

Stable Audio 2.0 is not limited to producing fixed and static sounds. It can produce various sounds and sound effects, from the timbre of a keyboard to the roar of a crowd or the chaos of a city street.

Here are some examples of the variations and sound effects that the model offers:

- Instrument sounds: Mimic the sounds of different instruments or create unique and hybrid sounds.

- Vocal effects: Robotize vocals, add reverb, or create harmonies.

- Natural sounds: Reproduce natural sounds such as rain, birdsong, wind, or traffic noise.

- Synthetic sounds: Create synthetic sounds such as science fiction effects, video game sounds, or robotic sounds.

Style transfer

One of the most exciting features of Stable Audio 2.0 is the ability to transfer styles. This feature allows users to seamlessly change newly created or uploaded audio during production, matching the output’s theme to the project’s specific style and tone.

Here are some use cases for style transfer:

- Changing a melody to a different musical genre, for example, changing a rock song to a jazz track.

- Imitating the style of a particular artist or composer.

- Adding an atmosphere or emotion to a sound effect.

Advanced research

Stable Audio 2.0 uses a latent diffusion model architecture specifically designed to produce complete tracks with consistent structures. The model is trained on a dataset of over 800,000 audio files from AudioSparx, containing music, sound effects, and single-instrument sources, along with the associated text metadata.

Some highlights of this advanced research include:

- Latent diffusion modeling starts from random noise in a compressed (latent) representation of the audio and refines it over a series of steps; the result is then decoded into a realistic and coherent audio waveform.

- The model was trained on a dataset containing various musical styles and sound effects, allowing it to produce more realistic and varied sounds.
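The noise-to-sound idea described in the highlights above can be illustrated with a toy loop. This is only a sketch of iterative denoising in general, not Stable Audio's actual sampler: `toy_denoiser` is a hypothetical stand-in that already knows the clean signal (a sine wave), whereas a real model uses a trained network to estimate it. All function names and parameters here are invented for the example.

```python
import math
import random


def clean_target(length):
    """The 'clean' signal the toy process should arrive at: one sine cycle."""
    return [math.sin(2 * math.pi * i / length) for i in range(length)]


def toy_denoiser(noisy):
    """Hypothetical stand-in for a trained network.

    A real model estimates the clean signal from the noisy input;
    this toy version simply returns the known target.
    """
    return clean_target(len(noisy))


def generate(length=64, total_steps=20, seed=0):
    """Start from random noise and move toward the denoiser's estimate."""
    rng = random.Random(seed)
    x = [rng.gauss(0.0, 1.0) for _ in range(length)]  # pure noise
    history = [x]
    for step in range(total_steps):
        estimate = toy_denoiser(x)
        # The blend weight grows each step and reaches 1.0 on the last one,
        # so the final result equals the estimate.
        weight = 1.0 / (total_steps - step)
        x = [(1 - weight) * xi + weight * ei for xi, ei in zip(x, estimate)]
        history.append(x)
    return history


def rms_error(signal):
    """Root-mean-square distance between a signal and the clean target."""
    target = clean_target(len(signal))
    return math.sqrt(sum((s - t) ** 2 for s, t in zip(signal, target)) / len(signal))


if __name__ == "__main__":
    for step, signal in enumerate(generate()):
        print(f"step {step:2d}: error {rms_error(signal):.4f}")
```

Running the script prints an error that shrinks at every step, mirroring how the signal evolves from noise into a coherent sound. In a latent diffusion model this refinement happens on a compressed representation, which is afterwards decoded into the waveform.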

Stable Audio 2.0 is a development that pushes the boundaries of AI-generated audio and redefines the future of music production. The model’s wide range of features gives musicians, sound designers, and artists more creative freedom than ever before.

Stable Audio 2.0 is set to revolutionize music production and sound design. To try the tool, users can visit this link.

Featured image credit: Stable Audio